To compete, innovate, and adapt in our digital world, your organization must identify, select, and retain high-quality technical talent well-suited to your business challenges. As Twilio CEO Jeff Lawson put it, “In today’s digital economy, it’s the companies that figure out how to build great software that can win the hearts, minds, and wallets of customers. And so that means unleashing the talent who builds software.”

It’s true. Technical talent best suited to your company’s objectives and requirements is a critical, competitive asset that can unlock your success.

High-quality skill evaluations are an essential part of making the best hiring decisions. With better hiring decisions, you will make fewer hiring mistakes, save valuable resources, and add a productive member to the team. However, effective candidate evaluations must both offer a positive experience for candidates (lightweight, relevant, and realistic) and accurately predict future job performance (comprehensive, in-depth, and reliable).

At first, it might seem difficult to fulfill both needs simultaneously. Fortunately, a concept called “construct validity” from the field of psychometrics can help balance these needs and bring significant benefits to your organization.

What Is Construct Validity? Why Does It Matter to Effective Tech Hiring?

Construct validity is the body of evidence that an assessment measures the construct (the skill or attribute) it is meant to measure, so that the intended interpretation of its results is correct. As you might guess, plenty of obstacles can keep an assessment from accurately measuring the construct its creators intended.

In the testing business, these obstacles are called construct validity “threats” and are grouped into two categories: missing important constructs (construct underrepresentation) and including the wrong constructs (construct-irrelevant variance). Samuel Messick, a leading validity theorist, put it this way: an assessment’s construct validity is threatened by “leaving out something that should be included… or including something that should be left out, or both” (p. 34).

Unfortunately, technical skill evaluations for hiring have lacked strong construct validity evidence. In the past, the main focus for roles like “software engineer” was to create accurate and efficient code within deadlines and budgets. Because of this, hiring managers naturally developed evaluations that imitated how they judged code accuracy and efficiency for general, theoretical problems.

This approach made sense back then due to the broad requirements of earlier technical roles and the need to quickly and effectively evaluate candidates’ code for correctness and efficiency, especially when there were a lot of candidates. However, things have changed. Feedback from candidates, evolving talent expectations, and technological progress have shown us that test creators need more than just intuition to make useful assessments. Companies must now improve their hiring evaluations by targeting a more specific set of skills and linking these skills directly to a job’s requirements.

An Example of Construct Validity in Technical Assessments

Let’s use a specific example to make this point concrete. Say that a deep-tech startup team of expert backend engineers creates a niche product with a novel technology. Now, the company needs to appeal to a broader audience by hiring front-end developers who can build a more user-friendly interface.

If the backend team relies on an ad-hoc recruitment process, they will likely fall back on evaluating front-end dev candidates on what backend engineers know best: creating efficient data structures and combinatorial algorithms. However, these skills aren’t relevant for most front-end dev jobs.

Later, the startup wants to increase backend capacity by hiring more senior backend software engineers to work on core technology. Using an ad-hoc recruitment process that lacks construct validity, the staff focus their evaluations on topics related to the core technology (and rightfully so) but overlook that the larger team also needs skills in communication, teamwork, distributing work, choosing a methodology, and negotiating scopes of responsibility.

Similarly, recruiters hiring software engineers may focus too much on testing proficiency in a particular technology (e.g., Python) before project leaders have even chosen the implementation technology. Or, software engineering skill assessments may focus on creating new code while neglecting debugging or testing skills.

Two construct validity threats in this hiring process are failing to measure front-end or collaboration skills (underrepresentation) and demanding extensive knowledge of algorithms that matter mainly for backend systems (irrelevant variance). These issues can seriously lower the quality of the team’s measurement. At best, a poor-quality assessment keeps the team from making the good hiring decision that could have given it a competitive edge. At worst, low-quality measures cause the team to make unfair, surface-level choices that have lasting adverse effects.
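One way to picture the two threats from the startup example is as simple set differences between the skills a role requires and the skills an evaluation actually measures. The sketch below is only an illustration under stated assumptions: the skill labels and the `validity_gaps` helper are hypothetical names invented for this example, and real construct validity work involves far more than comparing lists.

```python
# Hypothetical skill labels for the front-end role in the startup example.
required_skills = {"ui_implementation", "accessibility", "collaboration"}

# Hypothetical skills that the backend team's ad-hoc evaluation measures.
measured_skills = {"data_structures", "combinatorial_algorithms"}


def validity_gaps(required, measured):
    """Return (underrepresented, irrelevant) skills as sorted lists."""
    underrepresented = sorted(required - measured)  # required but never measured
    irrelevant = sorted(measured - required)        # measured but not required
    return underrepresented, irrelevant


underrepresented, irrelevant = validity_gaps(required_skills, measured_skills)
print("Underrepresented:", underrepresented)
print("Irrelevant:", irrelevant)
```

In this made-up case, every required skill lands in the underrepresented list and every measured skill lands in the irrelevant list, which mirrors the mismatch described above.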

Where does this leave us? It’s time for technical hiring to embrace a skills-based approach when choosing what to evaluate and making decisions. Rather than stopping at technology names or vague technical terms (e.g., programming, data literacy), this approach calls for drafting a complete list of well-defined skills required for a given role, team, or project and intentionally using those to craft assessments.

What Has Codility Done for Construct Validity in Our Assessments?

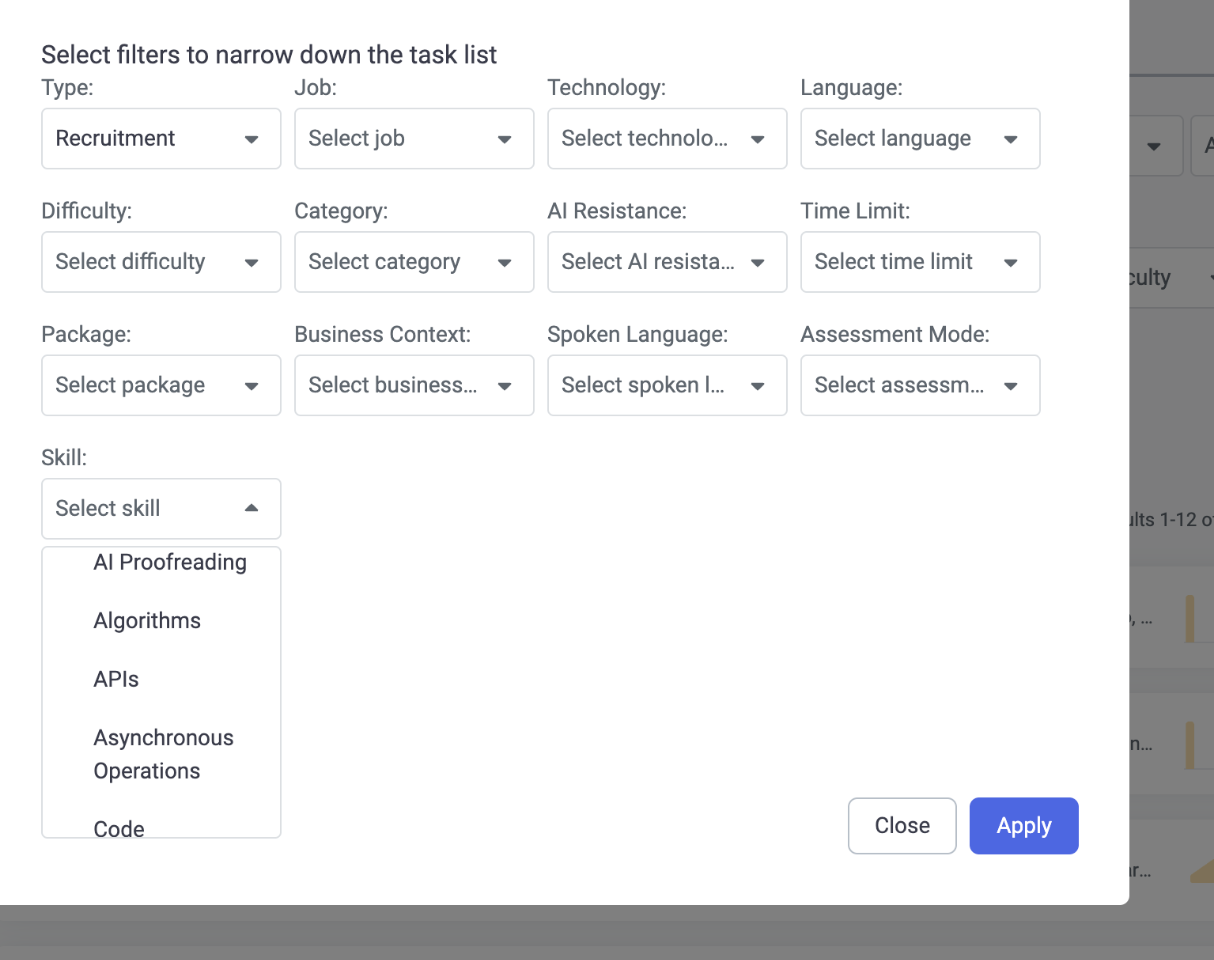

At Codility, industrial-organizational (I-O) psychology guides us toward assessment practices backed by strong construct validity evidence. For example, we’ve built a system for filtering all our tasks by the skills they’re intended to measure, not just by named technologies.

How did we identify the skills? Our I-O psychology team and nearly 50 subject matter experts defined the technical skills that apply across technologies and job families and mapped them to all of our tasks.
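To make the idea of filtering tasks by skill rather than by technology more tangible, here is a minimal sketch. It does not describe Codility's actual system or data model: the `Task` class, the task names, the skill labels, and the `tasks_measuring` function are all hypothetical, chosen only to show how a skill-to-task mapping could be queried.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    """A hypothetical assessment task tagged with the skills it measures."""
    name: str
    technology: str
    skills: frozenset


# Made-up task library for illustration only.
TASKS = [
    Task("fix_failing_checkout_test", "Python", frozenset({"debugging", "testing"})),
    Task("build_signup_form", "TypeScript", frozenset({"ui_implementation", "accessibility"})),
    Task("trace_memory_leak", "Java", frozenset({"debugging"})),
]


def tasks_measuring(tasks, wanted_skills):
    """Return names of tasks that measure every wanted skill, whatever the technology."""
    wanted = frozenset(wanted_skills)
    return [task.name for task in tasks if wanted <= task.skills]


print(tasks_measuring(TASKS, {"debugging"}))
```

Filtering on "debugging" here returns tasks written in two different technologies, which is the point of tagging tasks by skill instead of stopping at a technology name.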

On top of that, we’ve rolled up technical skills measured in today’s task library under a comprehensive skills model, the Codility Engineering Skills Model (ESM). The ESM helps future-proof our thinking on the skills engineers need in an AI-assisted world. It also provides a foundation for new task types and scoring methodologies.

Taken together, this skills-based focus, already enabled in our products, helps add greater construct validity to the assessments we help our clients build. Our focus on skills-based assessment development, skill mapping, and new, more precise skill labels for tasks drives better evaluations with better candidate experiences, leading to better outcomes for our customers.

What’s in Your Technical Skill Evaluation?

Construct validity is a cornerstone tenet of quality measurement. Construct validity is threatened when test users fail to define what an assessment measures. It’s also threatened when test users don’t take the necessary steps to ensure an assessment reflects job requirements.

With that said, here are a few questions to answer: What’s in your technical skill evaluation? Have you identified the required skills beyond a named technology requirement? Are the evaluation criteria in your assessments aligned with the job requirements?

We’re committed to your success and want to give you the right tools for the job. Let’s work together to bring new talent to your organization at scale, verify the best talent for the role, find the new teammate who fits holistically with your team, project, and company, or benchmark your current team to discover skill gaps and create training plans based on data, not conjecture.

Want to see the skill filters firsthand? Book a demo of Codility today!