Detecting AI Cheating: Why Technical Assessment Integrity Matters More Than Ever

Resume reviews and unstructured interviews have long been the foundation of technical hiring. We now know these traditional methods aren’t the most effective predictors of future job performance, and a new challenge has added to the pressure: the widespread use of AI tools. Recent surveys show that nearly 9 in 10 students (88%) acknowledge using generative AI tools like ChatGPT for tests in 2025, compared to just over half (53%) in 2024.

The implications for technical hiring are clear. When candidates can easily generate high-quality answers, correctness alone is no longer a reliable indicator of skill.

That raises a critical question: does your technical assessment strategy generate the right integrity signals to distinguish genuine capability from AI-assisted output and support confident hiring decisions?

At Codility, we believe integrity is no longer optional, but foundational.

How Codility Protects Assessment Integrity

Modern technical hiring faces a growing trust problem. Public solutions, AI-generated code, and increasingly sophisticated cheating tactics make it harder than ever to know whether an assessment truly reflects a candidate’s skills. Codility’s approach to integrity is designed to address these real-world challenges without making assessments feel like surveillance.

Detecting AI-Generated Code

AI tools can generate working code in seconds, making surface-level correctness a poor signal of skill. Codility’s similarity detection compares each submission against a large body of historical solutions and known AI-generated solutions, then surfaces statistically unusual overlap for human review.
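To make the idea of "unusual overlap" concrete, here is a minimal sketch of one common similarity technique: Jaccard similarity over token shingles. It is an illustration only and not a description of Codility’s actual detection method. The function names, the shingle size of 3, and the 0.8 threshold are all assumptions chosen for the example.

```python
import re

def shingles(code: str, k: int = 3) -> set[tuple[str, ...]]:
    """Split code into tokens and return the set of k-token windows."""
    # Tokenizing first means whitespace and indentation changes do not matter.
    tokens = re.findall(r"[A-Za-z_]\w*|\d+|\S", code)
    if len(tokens) < k:
        return {tuple(tokens)} if tokens else set()
    return {tuple(tokens[i:i + k]) for i in range(len(tokens) - k + 1)}

def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def flag_for_review(
    submission: str,
    known_solutions: dict[str, str],  # hypothetical corpus: label -> source code
    threshold: float = 0.8,           # assumed cutoff, not a real product setting
) -> list[tuple[str, float]]:
    """Return (label, score) pairs at or above the threshold, highest first."""
    sub = shingles(submission)
    hits = []
    for label, code in known_solutions.items():
        score = jaccard(sub, shingles(code))
        if score >= threshold:
            hits.append((label, score))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)
```

A high score in a sketch like this is a prompt for a human reviewer to look closer, not a verdict. Short tasks with one obvious solution will naturally produce overlap between honest candidates.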

AI-Assisted Assessments and Interviews—On Your Terms

If your team embraces the use of AI, Codility’s built-in AI tools let you evaluate how candidates collaborate with generative AI in a controlled environment that aligns with your organization’s requirements.

- AI tools for take-home coding assessments: Enable Cody, our built-in AI assistant, to see how candidates use AI to complete coding tasks. After they submit a solution, candidates can even receive AI-generated follow-up questions that probe their understanding and originality. Post-assessment, hiring teams can review the AI chat transcript and follow-up question responses.

- AI tools for online interviews: Interviewers watch in real time as candidates use our AI Copilot, powered by multiple AI models, to write and refine code, just as they do in their day-to-day work. Every AI interaction is also recorded and included in the post-interview report for you to revisit later.

These tools allow teams to evaluate critical AI collaboration skills, including how candidates break down problems (computational thinking), craft effective prompts (prompt engineering), and critically evaluate AI-generated code for errors and alignment with requirements (AI proofreading).

By integrating AI assistance directly into assessments, Codility standardizes access to modern developer tools while enabling hiring teams to evaluate AI collaboration as a measurable competency.

Who’s Taking the Test?

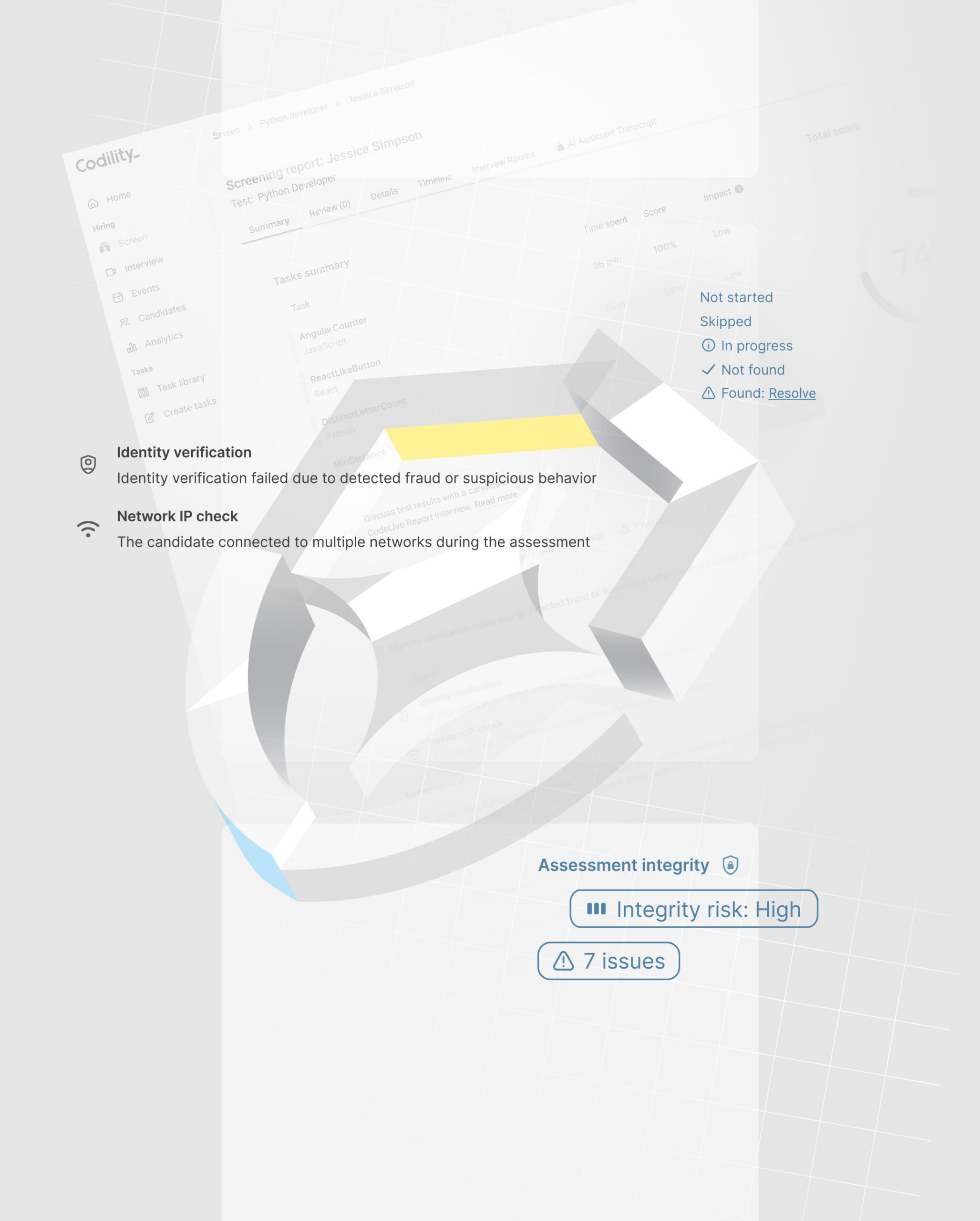

Remote hiring raises a basic but critical question: Is the right person completing the assessment? Codility monitors network and device changes during tests to surface unusual behavior, such as switching environments mid-assessment. Secure invitation controls and ID verification ensure that only intended candidates can access the test, helping teams maintain confidence in candidate identity.
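As a rough illustration of what "switching environments mid-assessment" can look like in data, the sketch below compares consecutive session check-ins and notes when the network or device changes. It is a simplified assumption, not Codility’s implementation: the `Heartbeat` record, its fields, and the `environment_changes` function are hypothetical names invented for this example.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Heartbeat:
    """One periodic check-in from the candidate's session (hypothetical shape)."""
    seconds_into_test: int
    ip_address: str
    device_fingerprint: str  # assumed opaque browser/device identifier

def environment_changes(heartbeats: list[Heartbeat]) -> list[str]:
    """Describe every network or device change between consecutive check-ins."""
    ordered = sorted(heartbeats, key=lambda h: h.seconds_into_test)
    notes = []
    for prev, cur in zip(ordered, ordered[1:]):
        if cur.ip_address != prev.ip_address:
            notes.append(
                f"{cur.seconds_into_test}s: network changed "
                f"({prev.ip_address} -> {cur.ip_address})"
            )
        if cur.device_fingerprint != prev.device_fingerprint:
            notes.append(f"{cur.seconds_into_test}s: device changed")
    return notes
```

A change on its own is not proof of misconduct: a candidate may move from Wi-Fi to a mobile hotspot after a connection drop. That is why a signal like this is better treated as context for a reviewer than as an automatic flag.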

Shared Answers and Leaked Tasks

Once a task appears online, its value quickly degrades. Codility mitigates this risk through careful task design, a large and actively managed task library, and operational processes to retire or replace compromised content.

Raw Signals Don’t Tell the Full Story

Copy-paste events or tab switches during assessments can be concerning, but in isolation they don’t give the full picture. What differentiates Codility is how these behavioral signals are contextualized alongside a structured code evaluation view that shows how a solution evolved, where it succeeded or failed, and which aspects of the problem the candidate truly mastered. Rather than relying on isolated flags or automated judgments, hiring teams get a clear picture of how a candidate approached the task.

Advanced Proctoring When Needed

Some roles, regions, or compliance requirements call for stronger controls. Codility offers advanced proctoring features such as screen monitoring and video recording when higher security is required. These features are fully configurable, allowing organizations to apply a customized level of oversight.

Looking Forward

As AI tools become standard in both assessment and development workflows, the line between legitimate assistance and misuse will continue to evolve.

Our approach at Codility is to provide transparency and control, and to simplify human oversight.

Technical assessment integrity isn’t just about catching AI-assisted cheating. It’s about ensuring that every hiring decision is grounded in accurate, job-relevant data, giving candidates a fair opportunity to demonstrate their capabilities, and building technical teams you can trust.

Learn more about protecting the integrity of your technical assessments with Codility’s AI detection and AI skills assessment features: Book a Codility demo today.