AI can write code now.

Hire the engineers who can build.

Codility Screen delivers standardized technical signal early in the funnel, backed by assessment science that holds up under scrutiny.

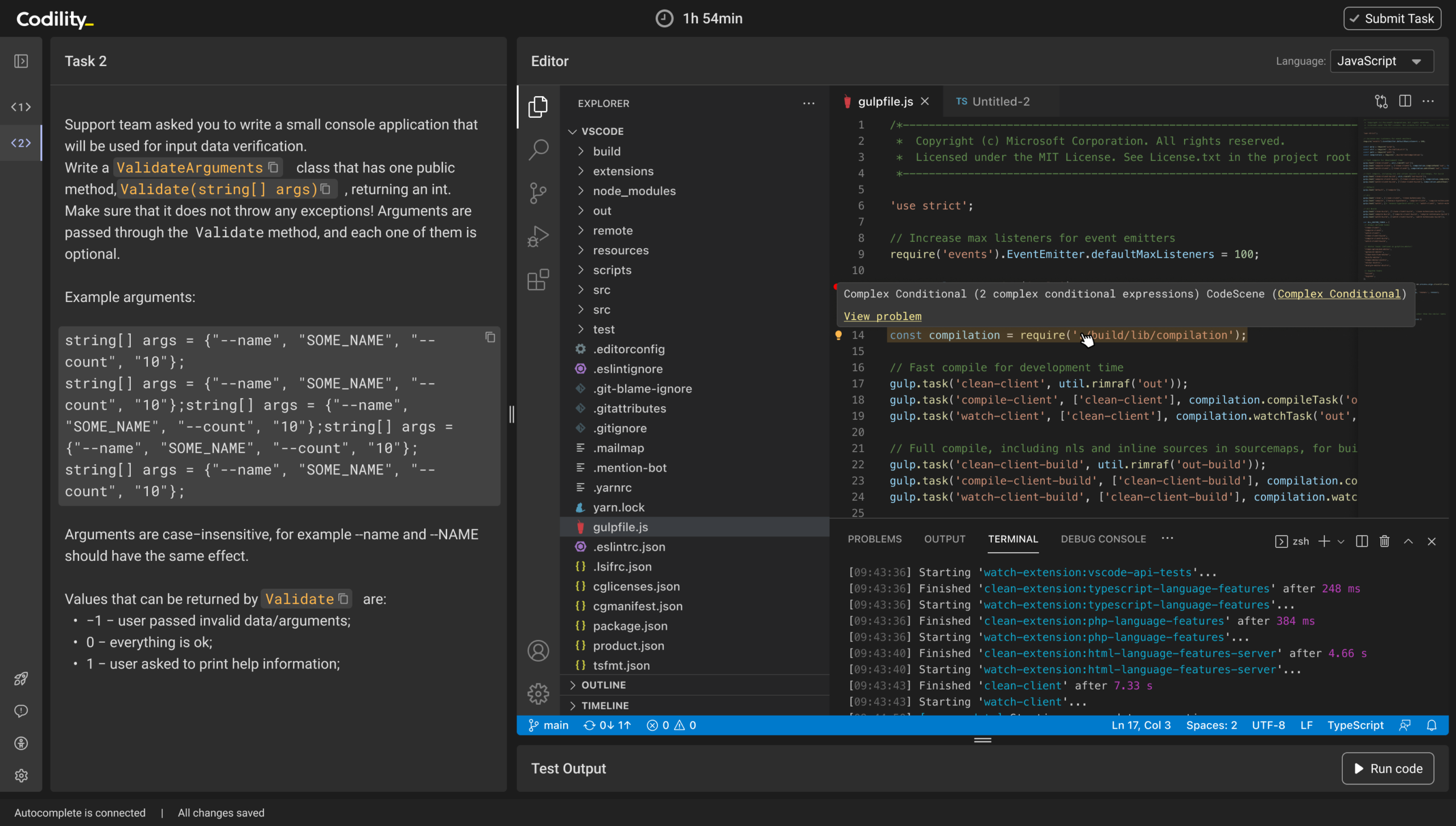

- Real engineering environments: 1,200+ work simulations across 80+ languages and frameworks in a full VS Code workspace

- Configurable AI posture: enable, restrict, or monitor AI use role by role, with every interaction logged and reviewable

- Defensible outcomes: every task validated by occupational psychologists, with adverse impact monitoring at every cut score

Trusted by engineering-first teams worldwide

Why early technical signal is harder than ever

AI-generated submissions blur the signal

Candidates can produce working code without understanding it. Traditional screening cannot distinguish engineers who build from engineers who prompt. You need a way to verify what someone actually knows.

High-volume pipelines need structured evaluation

Ad hoc processes break at scale. When every interviewer runs their own format, signal quality varies across the team and each hire rests on different evidence.

Hiring decisions face increasing scrutiny

From the EU AI Act to adverse impact audits, regulators and candidates are asking harder questions about how hiring decisions are made. An undocumented process is a liability.

Generic tools miss what matters

Toy problems and algorithm puzzles measure recall, not engineering ability. They do not predict how someone debugs production code, reviews AI output, or works across a real codebase.

One workflow: configure, assess, decide

Screen combines real engineering environments, layered integrity signals, and documented methodology in a single assessment workflow.

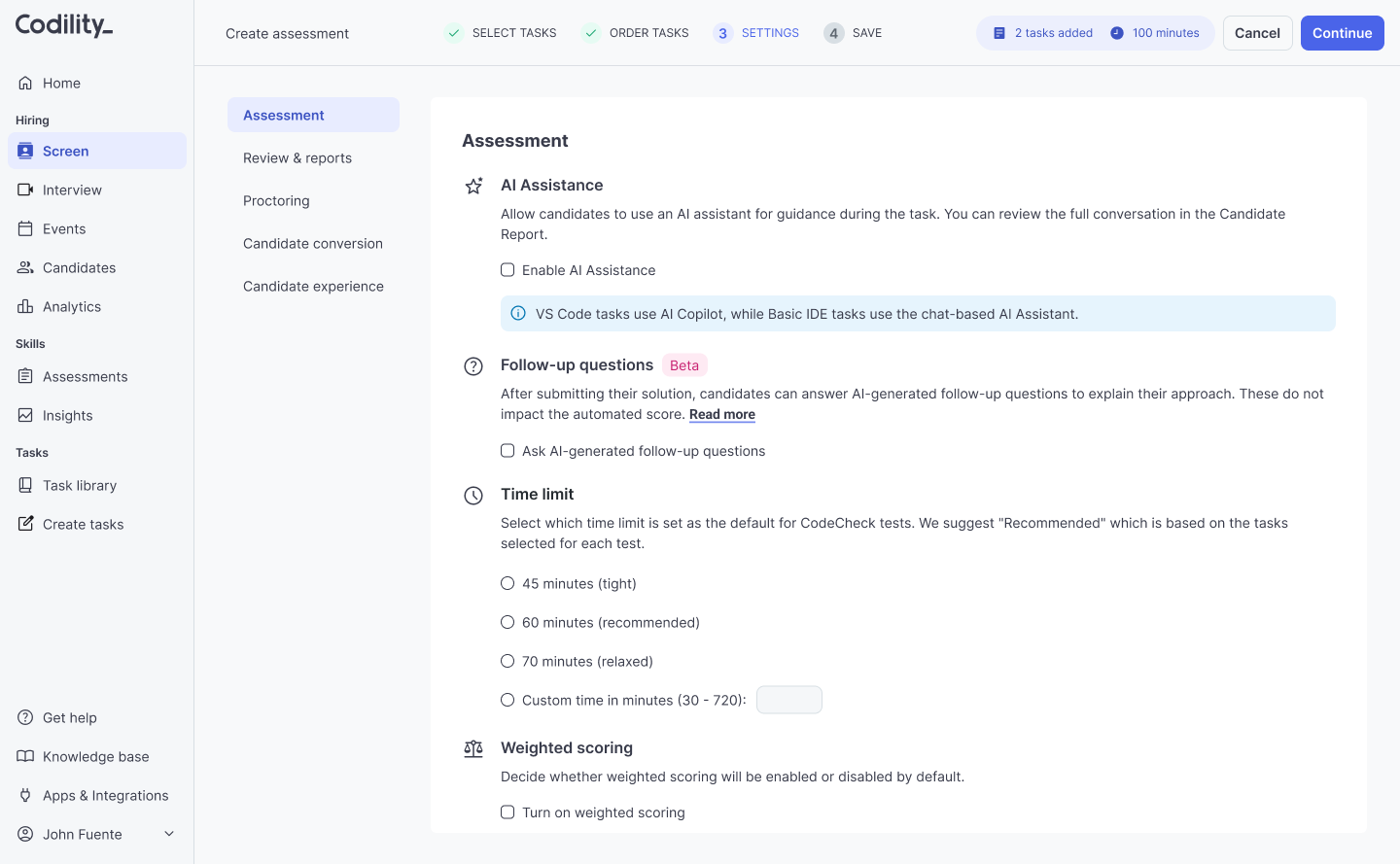

Configure assessments that match real work

Build assessments in a full VS Code environment with terminal access, packages, and multi-file projects. Choose from 1,200+ work simulations mapped to the Codility Engineering Skills Model across 80+ languages and frameworks.

Set AI posture role by role: enabled, restricted, or monitored. Every candidate gets the same environment and the same rules.

Candidates work the way your engineers work

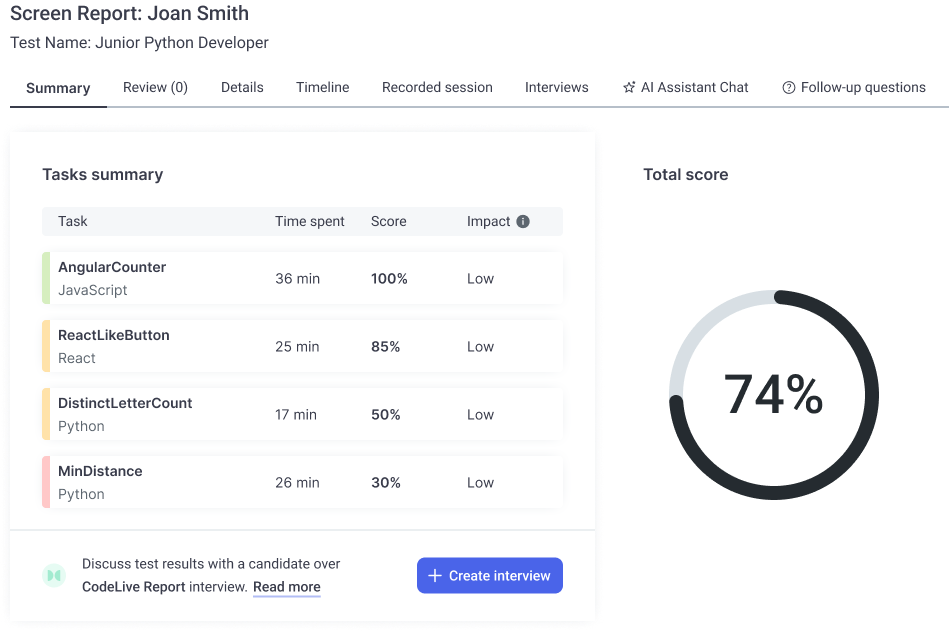

Capture rich, reviewable evidence

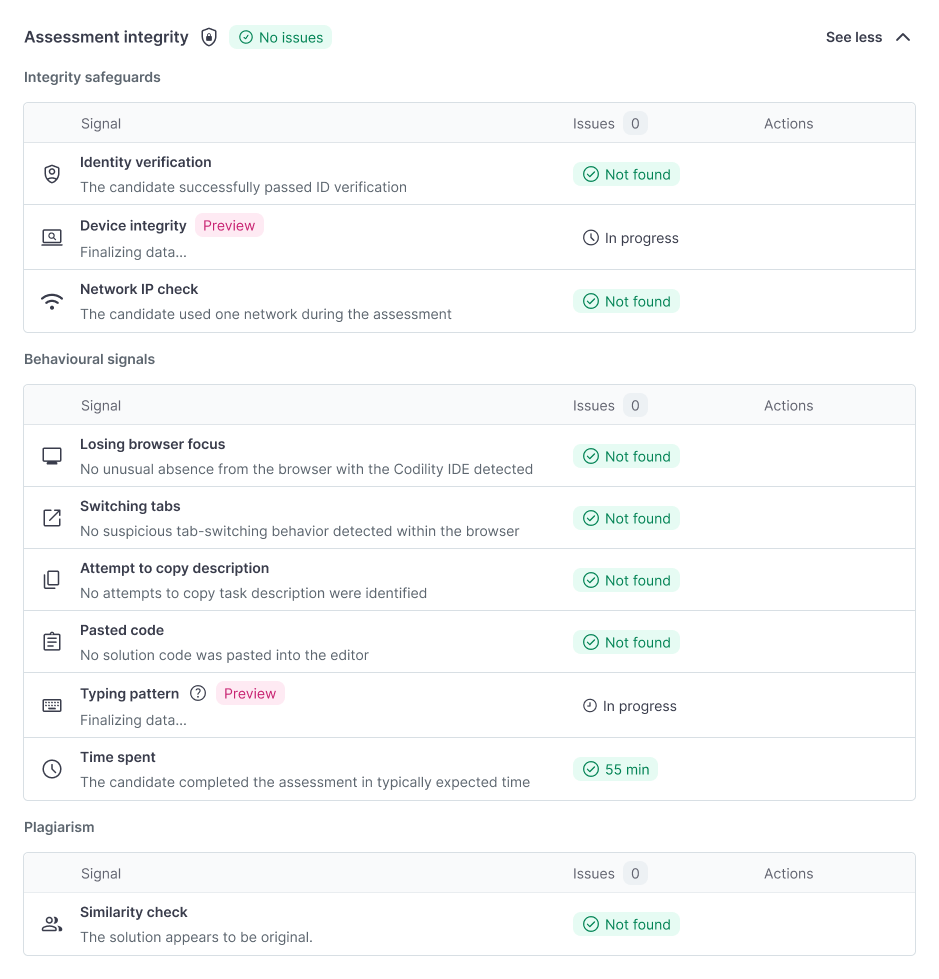

Layered integrity signals track how the work was done: behavioral monitoring, similarity checks, paste detection, and AI follow-up questions that verify whether a candidate understands the code they wrote.

Risk scoring aggregates signals into a clear indicator. Automated code quality analysis measures maintainability, complexity, and structure beyond correctness alone.

Signal on engineering ability, not just output

Decide with a defensible record

Structured scoring is backed by documented assessment methodology. Every task is reviewed by occupational psychologists, and adverse impact is monitored at every cut score against EEOC guidelines.
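For readers who want to see what an adverse impact check at a cut score can look like, here is a minimal sketch of the four-fifths rule from the EEOC Uniform Guidelines, which compares each group's pass rate to the highest group's pass rate. It is an illustration only: the function names, group labels, and counts are hypothetical, and nothing here is taken from Codility's actual monitoring method, which may use different or additional statistics.

```python
from typing import Dict, List, Tuple

# Hypothetical input shape: group label -> (candidates passing the cut score, total candidates)
Outcomes = Dict[str, Tuple[int, int]]


def selection_rates(outcomes: Outcomes) -> Dict[str, float]:
    """Pass rate per group at a given cut score; groups with no candidates are skipped."""
    return {group: passed / total for group, (passed, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: Outcomes) -> Dict[str, float]:
    """Each group's pass rate divided by the highest group's pass rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        # Nobody passed in any group, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes: Outcomes, threshold: float = 0.8) -> List[str]:
    """Groups whose impact ratio falls below the four-fifths (0.8) threshold."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Made-up counts for illustration only.
example = {"group_a": (48, 80), "group_b": (24, 60)}
print(impact_ratios(example))
print(flagged_groups(example))
```

A flagged group is a prompt for closer review of the cut score, not a conclusion in itself; small samples in particular usually call for a statistical significance test alongside the ratio.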

The cApStAn linguistic audit confirmed that 65% of tasks are written at or below CEFR level B1, keeping them accessible to non-native English speakers worldwide.

Decisions that hold up when questioned

What other tools miss

Typical technical screening tools

Codility Screen

Screen is where it starts

Codility extends the same validated methodology from screening through interviews and into your existing workforce.

Interview

Structured technical interviews in a shared VS Code environment with sidecar services, a whiteboard, and a full transcript. Replace ad hoc technical interviews with a repeatable, evidence-backed process.

Skills Intelligence

Map and verify technical capability across the engineering org using the same validated methodology. Staff projects on proven skills, target development where gaps actually exist, and report AI readiness with evidence.